This is the third post in a series exploring the evidence behind learning design decisions. The first post examined why the tell-and-test format underperforms and what retrieval practice research tells us about retention. The second post looked at scenario-based design and productive failure. This post takes the opposite direction: what does the research say when it argues that active learning might not be worth the effort?

If you have read the first two posts in this series, you could be forgiven for thinking the debate is settled. Active learning beats passive delivery. Retrieval practice outperforms re-reading. Scenarios outperform slides. Build interactive e-learning, and good outcomes will follow.

The evidence does point that way. But there is a serious counter-tradition in learning research — one that has been running for over forty years — that complicates the picture in ways that are genuinely useful for anyone designing e-learning. Engaging with the counterarguments honestly makes for better design decisions than simply citing the meta-analyses that support what we already want to believe.

The great media debate

In 1983, educational psychologist Richard Clark published a paper in the Review of Educational Research that has shaped the field ever since. His argument was blunt: media are mere delivery vehicles for instruction and do not influence learning. The analogy he used — that a delivery truck does not affect the nutritional value of the groceries it carries — became one of the most quoted lines in educational technology.

Clark's claim was based on decades of media comparison studies. Researchers had compared film to lectures, television to textbooks, computer-based training to classroom instruction. The consistent finding was what became known as the "no significant difference" phenomenon: when the instructional method was held constant, switching the delivery medium produced no meaningful change in outcomes.

Robert Kozma responded in 1994, arguing that the question should be reframed: not whether media do influence learning, but whether they will when instruction is designed to exploit what each medium can do. He proposed that certain media afford certain cognitive processes. A simulation can support mental model-building in ways a textbook cannot, not because simulations are inherently better, but because they enable different kinds of interaction with the material.

The debate never fully resolved. But Clark's core position has proven remarkably durable. Even his critics have largely shifted from arguing that media directly cause learning to arguing that media enable certain methods that cause learning — which is a more nuanced version of Clark's original point. The truck does not change the nutrition. But some trucks have refrigeration, which lets you carry cargo that would spoil otherwise.

What "no significant difference" actually means

The no significant difference finding is sometimes treated as proof that all formats are equally effective, and therefore we should choose whatever is cheapest. This is a misreading — and an important one to correct.

What the studies consistently show is that when you take the same instructional method and deliver it through different media, outcomes do not change. A recorded lecture produces similar results to a live lecture. A PDF of slides produces similar results to clicking through those slides online. This is not surprising. The method is doing the work. The medium is carrying it.

But here is the point that matters for e-learning design: the no significant difference finding does not say that all methods are equal. It says that all media are equal when the method is held constant. The Freeman et al. (2014) meta-analysis, the testing effect literature, and the ICAP framework all demonstrate substantial differences between methods. Active retrieval outperforms passive re-reading. Constructive engagement outperforms clicking. Scenario-based practice outperforms information presentation.

The practical implication is precise and worth stating clearly. If you take a slide deck, record a narrator reading it, and publish it as an e-learning module, you have changed the medium without changing the method. Clark's research predicts — correctly — that this will produce no improvement. If you instead redesign the content to include embedded retrieval questions, decision points, and spaced practice, you have changed the method. The evidence predicts — also correctly — that this will produce measurable gains.

The medium is not the message. The method is.

The case for passive learning — honestly stated

There are legitimate arguments for passive delivery that deserve more than dismissal.

First, passive does not necessarily mean cognitively idle. A learner reading a well-structured text while actively thinking about how it applies to their work is engaging in deep processing — even though they are not clicking, dragging, or selecting anything. Research on generative processing suggests that what matters is internal cognitive activity, not visible behavioural activity. A learner can be overtly passive while experiencing high levels of cognitive engagement.

Second, for straightforward factual content — definitions, procedures, regulatory requirements, reference material — direct instruction is efficient and effective. The testing effect literature shows that retrieval practice helps retention, but the material being retrieved still needs to be clearly presented in the first place. No amount of interactivity compensates for unclear or disorganised content. A well-written reference document that people actually consult when they need it may do more than an interactive module they complete once and never revisit.

Third, there is the pragmatic argument. Interactive e-learning takes longer to design, longer to build, and longer to maintain. If the content changes frequently — as compliance regulations or product specifications often do — the maintenance burden of branching scenarios and embedded interactions can become unsustainable. A simpler format that gets updated promptly may serve learners better than an elaborate interactive module that sits unchanged for two years after a regulation changes.

These are not arguments against active learning. They are arguments for matching the design investment to the learning need.

Where the evidence genuinely conflicts

There are areas where the research is less settled than advocates on either side tend to acknowledge.

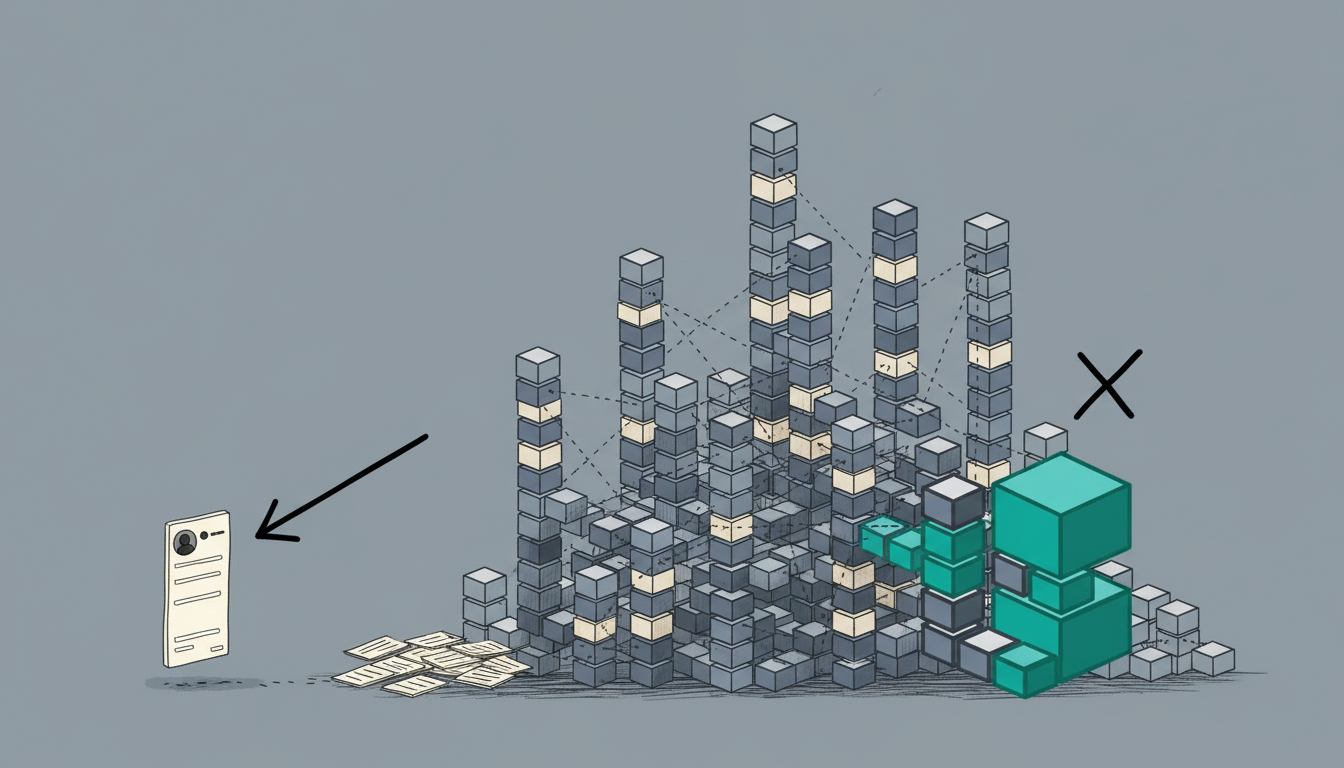

Interactivity can hurt. Several studies in multimedia learning have found that adding interactive elements to instructional materials can decrease learning outcomes compared to simpler formats. The mechanism is cognitive load: when the interaction itself is complex or unfamiliar, working memory resources that should be processing the content are instead consumed by figuring out the interface. This is the paradox at the heart of much e-learning: the features meant to increase engagement can reduce learning if they are not carefully designed. A drag-and-drop exercise that is mechanically fiddly, a branching scenario where the navigation is confusing, a simulation where the controls are unintuitive — all of these add extraneous cognitive load that competes with the instructional content.

Learners prefer what works less well. The Deslauriers et al. (2019) finding, mentioned in the first post of this series, bears repeating. Students who learned through active methods performed significantly better on assessments but reported lower satisfaction and lower perceived learning than students who attended polished lectures. Feelings of fluency — the sense that material is easy and clear — are not reliable indicators of durable learning. This creates a genuine tension for e-learning designers: the formats that produce the best feedback scores are often not the ones that produce the best retention. In organisations where course ratings drive decisions, this tension is structural.

Effect sizes are real but modest. Even in the most favourable meta-analyses, the effect sizes for active learning strategies range from about 0.15 to 0.50 standard deviations. An effect size of 0.33 (the finding from a 2025 meta-analysis on active strategies in video-based learning) means that, if scores in both groups are roughly normally distributed, the average active learner performs at about the 63rd percentile of the passive group rather than the 50th. This is meaningful, especially at scale. But it is not transformative. It does not mean that passive formats fail entirely or that active formats guarantee success. It means that, on average, across many studies, active approaches produce a moderate improvement. Whether that improvement justifies the additional development cost depends on context: the stakes of the learning, the size of the audience, and the longevity of the content.
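For readers who want to check the percentile arithmetic, here is a minimal sketch in Python. It assumes that scores in both groups are approximately normal with similar spread, so that a standardized mean difference d maps onto the standard normal cumulative distribution. The function name `percentile_from_effect_size` is made up for this illustration and does not come from any of the cited studies.

```python
from statistics import NormalDist


def percentile_from_effect_size(d: float) -> float:
    """Percentile of the comparison group where the average treated learner sits.

    Assumption: both groups' scores are roughly normal with equal spread,
    so the answer is the standard normal CDF evaluated at d.
    """
    return 100 * NormalDist().cdf(d)


# The low end, the 2025 video-learning figure, and the high end of the range.
for d in (0.15, 0.33, 0.50):
    print(f"d = {d:.2f} -> percentile {percentile_from_effect_size(d):.0f}")
```

Under those assumptions, the three effect sizes correspond to roughly the 56th, 63rd, and 69th percentiles. An effect size of zero maps to the 50th percentile, which is the baseline against which these figures should be read.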

What this actually means for design decisions

The research, taken as a whole, does not support either extreme. It does not support the claim that all e-learning should be interactive and scenario-based regardless of content or context. And it does not support the claim that passive delivery is just as effective and we should save the budget.

What it supports is a more honest set of design questions.

What kind of learning does this content require? If learners need to recall facts or follow procedures, direct instruction with spaced retrieval practice is efficient and well-supported. If learners need to exercise judgement, navigate ambiguity, or apply principles in unfamiliar situations, scenario-based and constructive approaches earn their development cost. Matching the method to the learning goal — not applying the same template to everything — is where the evidence points.

Is the interactivity doing cognitive work, or cosmetic work? A click-to-reveal panel and a retrieval question both count as "interactive" in an authoring tool. They are not equivalent. The question is whether the learner is being asked to think — to retrieve, to decide, to generate — or merely to perform a motor action to advance the content. If the interaction could be replaced by a page turn without any loss of cognitive engagement, it is not earning its place.

What is the realistic maintenance window? A branching scenario that is accurate and current teaches more than a slide deck. A branching scenario that has not been updated since the regulation changed teaches less than nothing, because it rehearses learners in rules that no longer apply. Design sophistication needs to be matched with realistic update commitments. For rapidly changing content, a simpler format that stays current may genuinely serve learners better.

What are the actual stakes of failure? For a compliance refresher where the real goal is documentation, the development investment in rich interactivity may not be justified. For training where poor performance has safety, financial, or reputational consequences — onboarding in regulated industries, customer-facing communication, clinical decision-making — the moderate effect size of active learning, applied across thousands of learners, translates to meaningful organisational impact.

The honest summary

Active learning works. The evidence is consistent, replicated across hundreds of studies, and the effect sizes are meaningful. Retrieval practice is one of the most reliably demonstrated findings in all of cognitive psychology. Scenario-based learning outperforms information delivery for applied skills. The ICAP hierarchy — Interactive over Constructive over Active over Passive — holds up across contexts.

But "active learning works" is not the same as "every e-learning module should be interactive." The gains are moderate, not magical. Poorly designed interactivity can be worse than well-designed passive delivery. The development cost is real. And for some learning goals, straightforward direct instruction is the right answer.

The useful question is not whether active involvement is better in the abstract. It is where, specifically, the evidence says the extra investment produces returns that justify it — and where it does not. The research gives us enough to answer that question with reasonable precision, if we are willing to read all of it rather than just the parts that confirm what we were already planning to build.

Sources: Clark (1983, 1994); Kozma (1991, 1994); Freeman, Eddy, McDonough, Smith et al. (2014); Roediger & Karpicke (2006); Chi & Wylie (2014); Deslauriers, McCarty, Miller, Callaghan & Kestin (2019); Means, Toyama, Murphy, Bakia & Jones (2009/2013); Adesope, Trevisan & Sundararajan (2017); Meta-analysis on active learning strategies in video learning (2025, Learning and Instruction).